VR content creation is going meta, my friends.

The concept of creating VR content within a virtual environment isn’t new. From election-themed battles built in the HTC Vive using Tilt Brush to drag-and-drop game creation in VR using Unity’s Carte Blanche, the idea is that working inside a virtual space makes it easier to conceptualize and build out VR worlds.

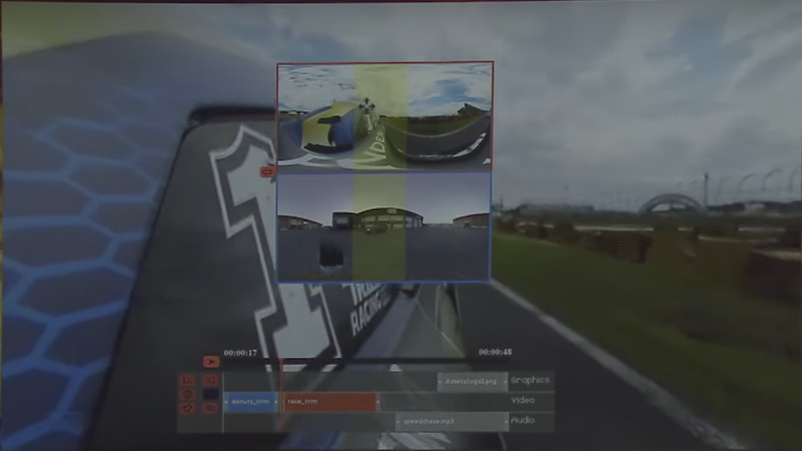

Well, that VR-within-VR approach is making its way to 360 video with Adobe’s Project Clover. Announced at Adobe’s MAX Creative Conference in early November, the special version of Premiere Pro allows users to edit their VR videos from within an Oculus Rift headset.

Typically, editors do their best to edit 360 videos based on rectangular projections of VR environments displayed on the flat screens of our desktops and laptops. But those projections distort your video, making it difficult to understand exactly what’s happening and what it will look like for end users.
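To put a rough number on that distortion, here is a minimal sketch. It assumes the flat view is an equirectangular projection, the layout commonly used for 360 video; the article doesn’t specify which projection Premiere shows. In that layout every row of the frame spans the full 360 degrees, so content gets stretched horizontally by a factor of 1 / cos(latitude) as you move away from the horizon. The function name is made up for illustration.

```python
import math


def horizontal_stretch(latitude_deg: float) -> float:
    """Horizontal stretch factor of an equirectangular frame at a given latitude.

    Assumes 0 degrees is the horizon and +/-90 degrees are the poles.
    The factor grows without bound as the latitude approaches a pole.
    """
    return 1.0 / math.cos(math.radians(latitude_deg))


if __name__ == "__main__":
    # Print how much wider content appears on the flat screen than in the headset.
    for lat in (0, 30, 60, 80):
        print(f"{lat:>2} degrees from the horizon: {horizontal_stretch(lat):.2f}x wider")
```

An object 60 degrees above the horizon appears about twice as wide on the flat timeline as it will in the headset, which is part of why editors struggle to judge shots from the desktop view alone.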

The industry knows this is a problem. That’s why there are plugins that allow creators to preview their videos in a headset from within editing environments. But that isn’t the most seamless solution.

“If we want to really see what’s going on [in our 360 video], we’ve got to put the headset on, then we’ve got to look around, and if we see something we don’t like, we have to take the headset off and go back to Premiere and change it,” said Adobe’s Stephen DiVerdi. “Then, to see what the change looks like, you have to put the headset back on. After you do this for a little while, it gets pretty tiring.”

With Project Clover, users edit their videos with the Oculus controllers from within the Rift headset. Although the interface is devoid of many of Premiere’s features, it does show a simple timeline over your VR video with a handful of editing options.

One key feature DiVerdi showed on stage is Project Clover’s rotational alignment tool, which allows users to set the starting orientation of each shot so that it lines up with the shot before it.

“You might have some action that’s important before the cut and some different action that’s important after the cut, and you want them to both be in the same part of the scene so your audience doesn’t lose what they’re looking at,” DiVerdi said. Without that direction, he said, the viewer might not know where to look and could miss a part of your story.
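The idea DiVerdi describes can be sketched in a few lines. This is not Adobe’s implementation, just an illustration under two assumptions: the footage is stored as equirectangular frames (so rotating the scene about the vertical axis is a horizontal shift with wraparound), and the editor already knows the yaw angle of the important action in each shot. All function names here are hypothetical.

```python
def alignment_offset(yaw_before: float, yaw_after: float) -> float:
    """Yaw rotation (degrees) to apply to the incoming shot so its action
    lands where the outgoing shot's action was. Result is in [-180, 180)."""
    return (yaw_before - yaw_after + 180.0) % 360.0 - 180.0


def yaw_to_column(yaw_deg: float, width: int) -> int:
    """Pixel column for a yaw angle, assuming 0 degrees is the frame centre
    and positive angles are to the right."""
    return int(round((yaw_deg / 360.0 + 0.5) * width)) % width


def rotate_frame(frame, offset_deg: float):
    """Rotate an equirectangular frame (a list of pixel rows) about the
    vertical axis by shifting every row to the right, wrapping at the edge."""
    width = len(frame[0])
    shift = int(round(offset_deg / 360.0 * width)) % width
    if shift == 0:
        return [list(row) for row in frame]
    return [list(row[-shift:]) + list(row[:-shift]) for row in frame]


if __name__ == "__main__":
    width = 8
    # Outgoing shot: action at yaw -90. Incoming shot: action at yaw +90.
    offset = alignment_offset(-90.0, 90.0)
    incoming = [["."] * width]
    incoming[0][yaw_to_column(90.0, width)] = "X"
    aligned = rotate_frame(incoming, offset)
    print("before:", "".join(incoming[0]))
    print("after: ", "".join(aligned[0]))
```

After the rotation, the marker in the incoming shot sits in the same column as the action in the outgoing shot, so a viewer who was watching that spot before the cut is still looking at the right place after it.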

Being able to direct the viewer’s experience in VR has been a continual challenge for journalists. We’re used to being able to select what people see and structure our own stories. With the total freedom of a VR environment, we lose some of that power.

In an attempt to direct our audience, we narrate, we use arrows, we insert text. But we can also offer some guidance by simply choosing what they see first in every shot.

“Then they’re not looking around wondering, ‘Where am I supposed to be looking right now?’ and meanwhile, they’re missing part of your story that’s happening in the background,” DiVerdi said.

Adobe is also working on a solution to allow users to properly place audio in a VR environment for a true 3D audio experience—which can also be used to help guide users—but offered no further details for now.

Adobe hasn’t said whether (or when) the test project will become an actual product—and the interface has a ways to go—but it could make it easier for editors to visualize VR videos in real time down the road. As one of Adobe’s emcees said, “Editors already live in a hole, and when they come out it’s like they’ve lived in a different world.” If Project Clover gets off the ground, editing really will feel like an otherworldly experience.

For more information about Adobe products and projects, visit the Adobe website.