OpenAI Sora is an AI text-to-video model that generates video so realistic it is hard to tell it was made by AI. It’s very lifelike but not real. I think we have just hit the beginning of some truly powerful AI-generated video that could change the game for stock footage and more. Below are two examples of the most realistic AI prompt-generated videos I have seen.

Prompt:

A stylish woman walks down a Tokyo street filled with warm glowing neon and animated city signage. She wears a black leather jacket, a long red dress, and black boots, and carries a black purse. She wears sunglasses and red lipstick. She walks confidently and casually. The street is damp and reflective, creating a mirror effect of the colorful lights. Many pedestrians walk about.

Prompt:

Drone view of waves crashing against the rugged cliffs along Big Sur’s garay point beach. The crashing blue waters create white-tipped waves, while the golden light of the setting sun illuminates the rocky shore. A small island with a lighthouse sits in the distance, and green shrubbery covers the cliff’s edge. The steep drop from the road down to the beach is a dramatic feat, with the cliff’s edges jutting out over the sea. This is a view that captures the raw beauty of the coast and the rugged landscape of the Pacific Coast Highway.

Prompt:

Animated scene features a close-up of a short fluffy monster kneeling beside a melting red candle. The art style is 3D and realistic, with a focus on lighting and texture. The mood of the painting is one of wonder and curiosity, as the monster gazes at the flame with wide eyes and open mouth. Its pose and expression convey a sense of innocence and playfulness, as if it is exploring the world around it for the first time. The use of warm colors and dramatic lighting further enhances the cozy atmosphere of the image.

Sora can generate videos up to a minute long while maintaining visual quality and adherence to the user’s prompt. OpenAI states it is teaching AI to understand and simulate the physical world in motion, with the goal of training models that help people solve problems that require real-world interaction.

Prompt:

Tour of an art gallery with many beautiful works of art in different styles.

Sora is not available to the masses yet. For now, it’s only available to “red teamers” who assess critical areas for harms or risks. OpenAI is also granting access to a number of visual artists, designers, and filmmakers to gain feedback on how to advance the model to be most helpful for creative professionals.

Prompt:

Historical footage of California during the gold rush.

The sample above is a strong use case: depicting a time in the past, like a California town during the gold rush, with an aerial flyover. It looks amazing.

OpenAI states the model deeply understands language, enabling it to accurately interpret prompts and generate compelling characters that express vibrant emotions. Sora can also create multiple shots within a single generated video that accurately persist characters and visual style.

One question I have is how much an AI-generated video can be changed or edited after the fact, like changing the person’s hair color or having the subject walk faster while keeping the rest of the scene the same. Writing a simple descriptive prompt is one thing, but being able to control all the intricacies of a scene is another.

Prompt:

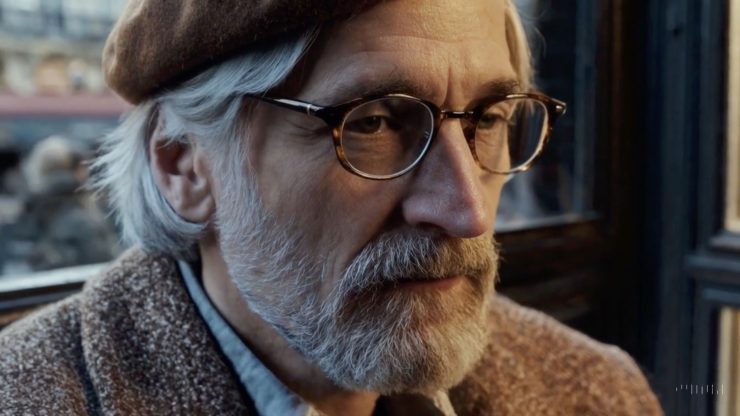

An extreme close-up of an gray-haired man with a beard in his 60s, he is deep in thought pondering the history of the universe as he sits at a cafe in Paris, his eyes focus on people offscreen as they walk as he sits mostly motionless, he is dressed in a wool coat suit coat with a button-down shirt , he wears a brown beret and glasses and has a very professorial appearance, and the end he offers a subtle closed-mouth smile as if he found the answer to the mystery of life, the lighting is very cinematic with the golden light and the Parisian streets and city in the background, depth of field, cinematic 35mm film.

The current model has weaknesses. It may struggle with accurately simulating the physics of a complex scene, and may not understand specific instances of cause and effect. For example, a person might take a bite out of a cookie, but afterward, the cookie may not have a bite mark.

Prompt:

Archeologists discover a generic plastic chair in the desert, excavating and dusting it with great care.

Weakness:

In this example, Sora fails to model the chair as a rigid object, leading to inaccurate physical interactions.

The model may also confuse spatial details of a prompt, for example, mixing up left and right, and may struggle with precise descriptions of events that take place over time, like following a specific camera trajectory.

Prompt:

A litter of golden retriever puppies playing in the snow. Their heads pop out of the snow, covered in.

Several samples on OpenAI’s Sora page are truly impressive and worth a look. It’s incredible and a little scary: the realism is striking, and the animation prompts are stunning, too. All the samples seem to be at higher frame rates, around 60 fps, which will help smooth out the flaws a bit. I would like to see 30 fps and 24 fps samples.

I know we in the biz are concerned that AI will replace creative jobs like videographers, photographers, editors, and filmmakers. I have to say it looks scary out there. All it takes is an idea and a solid prompt. I do feel that AI is basically stealing content that already exists and has been posted on the internet. This is, in a lot of ways, copyright infringement, and we will see how the courts handle the issue, but the cat is out of the bag, and there is no turning back at this point.

The next stage for AI prompt filmmaking is dialogue-based scenes. So far, these examples are more like b-roll.