Sony has announced what it bills as the world’s first intelligent vision sensors with AI processing functionality. Don’t get too excited, though: these are 1/2.3-type sensors meant for AI-equipped cameras in the retail and industrial equipment industries.

So why am I writing about it then? Well, any new sensor technology is interesting, and even though these particular sensors aren’t intended for professional video use, they do give us a window into the direction sensors could take.

You might be asking what exactly AI processing is and what it can do by being placed on a sensor. That is a good question. Without getting too technical, it allows the camera using the sensor to output complex metadata, real-time tracking, and images, all at the same time. For instance, a camera equipped with one of these sensors could be installed in a business or security environment and not only capture and stream images, but also perform complex AI tasks. These AI tasks could be wide and varied. It’s best to think of these sensors as smart sensors that do far more than just capture images.

In the future, there is no reason why sensors with AI processing functionality couldn’t find their way into cameras that we may one day use. The possibilities are endless as to what information could be gathered and processed while you are filming. They could be used for VFX and motion capture applications, as well as a variety of other tasks.

What has Sony done?

Sony has placed AI processing functionality on the image sensor itself. This allows for high-speed edge AI processing and extraction of only the necessary data, which, when using cloud services, reduces data transmission latency, addresses privacy concerns, and reduces power consumption and communication costs.

The spread of IoT has resulted in all types of devices being connected to the cloud, making commonplace the use of information processing systems where information obtained from such devices is processed via AI on the cloud. The Internet of things (IoT) is a system of interrelated computing devices, mechanical and digital machines provided with unique identifiers (UIDs) and the ability to transfer data over a network without requiring human-to-human or human-to-computer interaction.

With the increasing volume of information handled in the cloud, companies face problems such as increased data transmission latency hindering real-time information processing; security concerns from users associated with storing personally identifiable data in the cloud; and other issues such as the increased power consumption and communication costs cloud services entail.

So how are these problems being addressed?

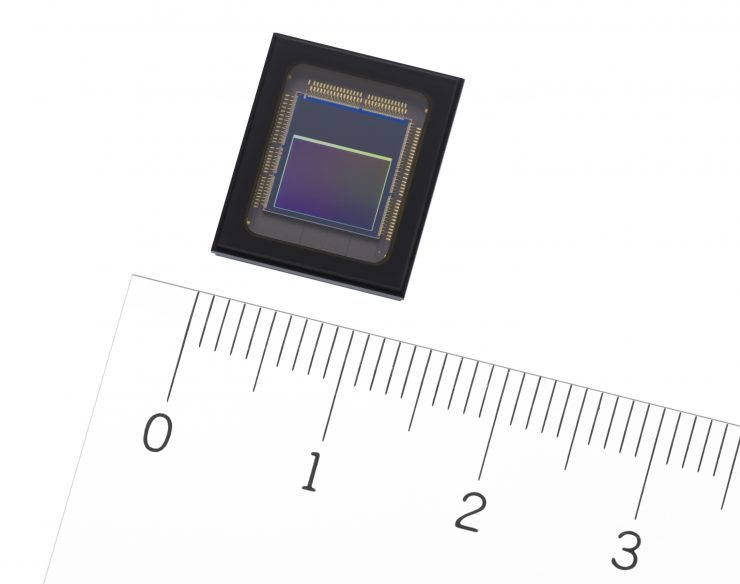

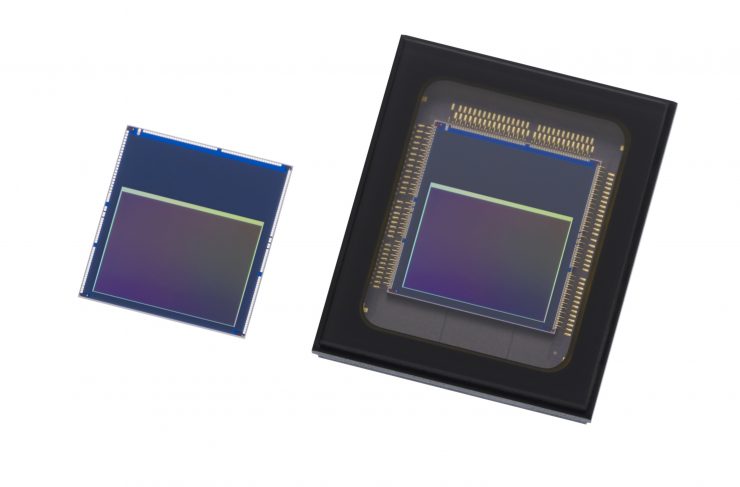

Enter the IMX500 & IMX501

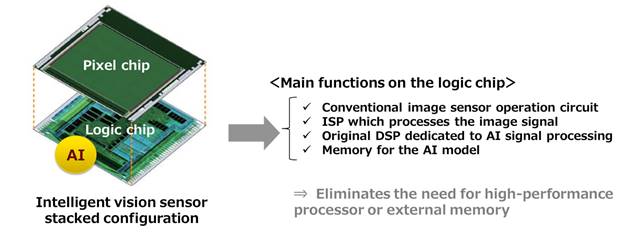

The new sensor products feature a stacked configuration consisting of a pixel chip and logic chip.

The pixel chip is back-illuminated and has approximately 12.3 effective megapixels for capturing information across a wide angle of view. In addition to the conventional image sensor operation circuit, the logic chip is equipped with Sony’s original DSP (Digital Signal Processor) dedicated to AI signal processing, and memory for the AI model. This configuration eliminates the need for high-performance processors or external memory, making it ideal for edge AI systems.

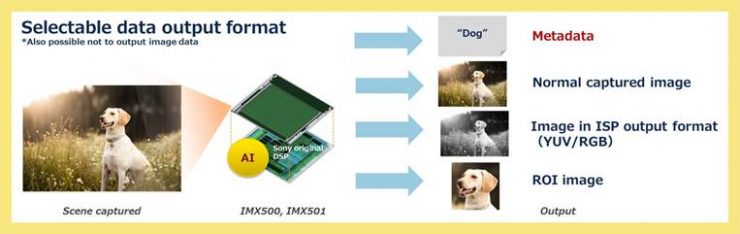

Signals acquired by the pixel chip are run through an ISP (Image Signal Processor), AI processing is then done in the processing stage on the logic chip, and the extracted information is output as metadata, reducing the amount of data handled. Because the image information itself does not have to be output, security risks are reduced and privacy concerns are addressed. In addition to the image recorded by the conventional image sensor, users can select the data output format according to their needs and uses, including ISP format output images (YUV/RGB) and ROI (Region of Interest) specific area extract images.
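To get a feel for how much data metadata-only output could save, here is a rough back-of-the-envelope sketch. Only the 12.3-megapixel figure comes from Sony; the pixel format, frame rate, and metadata size are my own illustrative assumptions, not published specifications.

```python
# Rough data-volume comparison: full frames vs. metadata-only output.
# Only the 12.3-megapixel figure comes from the article; the rest is assumed.

EFFECTIVE_PIXELS = 12_300_000    # approx. 12.3 effective megapixels (from the article)
BYTES_PER_PIXEL = 1.5            # assumption: uncompressed YUV 4:2:0, 8 bits per sample
FRAMES_PER_SECOND = 30           # assumption: illustrative frame rate
METADATA_BYTES_PER_FRAME = 256   # assumption: a handful of detections per frame


def bytes_per_second(bytes_per_frame: float, fps: int) -> float:
    """Return the uncompressed data rate for a stream of fixed-size frames."""
    return bytes_per_frame * fps


full_frame_bytes = EFFECTIVE_PIXELS * BYTES_PER_PIXEL
image_rate = bytes_per_second(full_frame_bytes, FRAMES_PER_SECOND)
metadata_rate = bytes_per_second(METADATA_BYTES_PER_FRAME, FRAMES_PER_SECOND)
reduction_factor = image_rate / metadata_rate

print(f"Full frames:   {image_rate / 1e6:.1f} MB/s")
print(f"Metadata only: {metadata_rate / 1e3:.2f} kB/s")
print(f"Reduction:     about {reduction_factor:,.0f}x")
```

Real-world numbers would depend on compression, the output format chosen, and how much metadata a given AI model produces, so treat this as an order-of-magnitude illustration only.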

How do they differ from a normal sensor?

When a video is recorded using a conventional image sensor, it is necessary to send data for each individual output image frame for AI processing, resulting in increased data transmission and making it difficult to deliver real-time performance. The new sensor products from Sony perform ISP processing and high-speed AI processing (3.1 milliseconds processing for MobileNet V1*2) on the logic chip, completing the entire process in a single video frame. This design makes it possible to deliver high-precision, real-time tracking of objects while recording video.

*2 MobileNet V1: An image analysis AI model for object recognition on mobile devices.
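To put the 3.1 millisecond figure in context, the sketch below compares it with the time available per frame at a few frame rates. The frame rates are my own assumptions for illustration, and the calculation only covers the quoted AI processing time, since no figure is given for the ISP stage.

```python
# How much of a single frame period does the quoted AI processing time use?

INFERENCE_MS = 3.1  # MobileNet V1 processing time quoted in the article


def frame_period_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def budget_share(inference_ms: float, fps: float) -> float:
    """Fraction of one frame period taken up by inference."""
    return inference_ms / frame_period_ms(fps)


def fits_in_frame(inference_ms: float, fps: float) -> bool:
    """True if inference completes within a single frame period."""
    return inference_ms <= frame_period_ms(fps)


for fps in (30, 60, 120):  # assumption: illustrative frame rates
    print(
        f"{fps:>3} fps: frame period {frame_period_ms(fps):5.2f} ms, "
        f"inference uses {budget_share(INFERENCE_MS, fps):.1%}, "
        f"fits: {fits_in_frame(INFERENCE_MS, fps)}"
    )
```

At every frame rate in this list, the quoted inference time is shorter than the frame period, which is consistent with the claim that the whole process completes within a single video frame.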

Users can write the AI models of their choice to the embedded memory and can rewrite and update them according to their requirements or the conditions of the location where the system is being used. For example, when multiple cameras employing this product are installed in a retail location, a single type of camera can be used across different locations, circumstances, times, or for different purposes.

For example, when installed at the entrance to a facility, it can be used to count the number of visitors entering. If it is installed on a store shelf, it can be used to detect stock shortages. If it is on the ceiling, it can be used for heat mapping store visitors (detecting locations where many people gather). Furthermore, the AI model in a given camera can be rewritten from one used to detect heat maps to one for identifying consumer behavior, and so on.
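The idea of one camera type serving different purposes can be sketched in a few lines of code. To be clear, this is not Sony's API: the `SmartCamera` class, its `write_model` method, and the model names are all hypothetical stand-ins I made up to illustrate the retail examples above.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SmartCamera:
    """Hypothetical stand-in for a camera built around an AI-capable sensor."""

    location: str
    model: Optional[str] = None
    history: List[str] = field(default_factory=list)

    def write_model(self, model_name: str) -> None:
        """Replace the model held in the (simulated) embedded memory."""
        if self.model is not None:
            self.history.append(self.model)  # remember what was replaced
        self.model = model_name


# Placement-to-task mapping based on the retail examples in the article.
# The model names themselves are hypothetical.
MODEL_FOR_LOCATION = {
    "entrance": "visitor_counting",
    "shelf": "stock_shortage_detection",
    "ceiling": "visitor_heat_mapping",
}


def deploy(locations: List[str]) -> List[SmartCamera]:
    """Create one identical camera per location and load the matching model."""
    cameras = []
    for location in locations:
        camera = SmartCamera(location)
        camera.write_model(MODEL_FOR_LOCATION[location])
        cameras.append(camera)
    return cameras


cameras = deploy(["entrance", "shelf", "ceiling"])

# Later, the ceiling camera is repurposed without changing any hardware.
ceiling_camera = cameras[2]
ceiling_camera.write_model("consumer_behavior_identification")

for camera in cameras:
    print(f"{camera.location}: {camera.model}")
```

The point of the sketch is that the hardware stays identical across all three placements; only the model written to each camera differs, and it can be swapped later.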

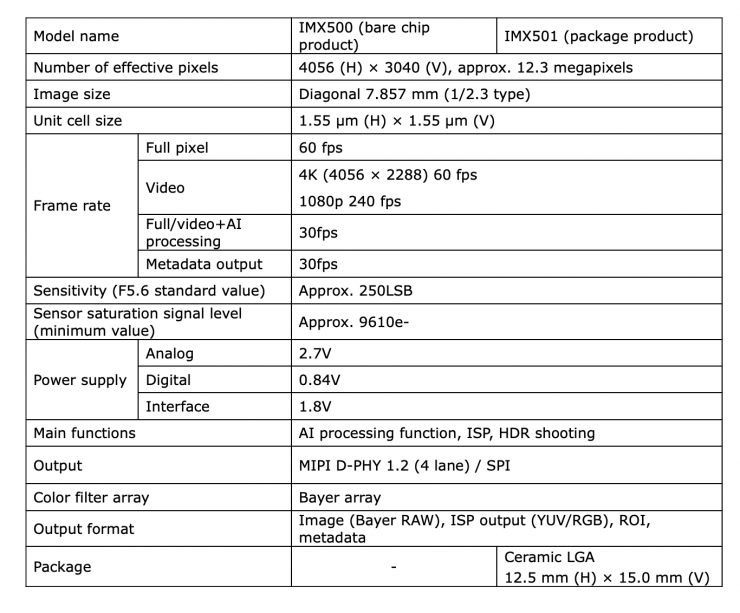

Key Specifications

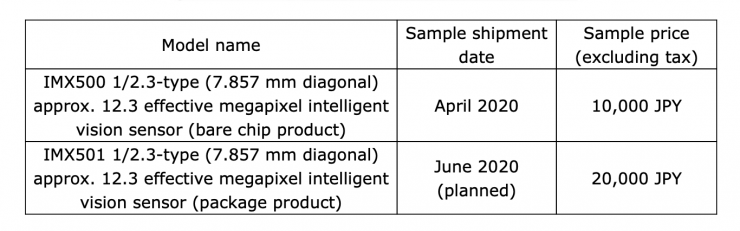

The sensors will retail for the following prices and are expected to be available at the times listed below:

These prices work out to roughly $93 USD and $185 USD.