Facebook’s move into 360° video continues, this time by making the technology it has been working on open source via GitHub. It’s an interesting development, and follows the company’s approach to its data centre hardware and even the code that is used to build Facebook itself. It also offers an insight into the sort of computing power needed to deal with 360° content as acquisition methods become more widespread.

The material on GitHub is laid out rather like an IKEA instruction manual, with separate repos for the control and render software and even .STEP files for 3D CAD packages. So while it’s definitely now possible to build one yourself, it’s actually of more interest as a way to see how Facebook’s engineering team tackled the problem of acquisition for 360 video. They do make use of off-the-shelf internals but there are still lots of specially fabricated parts that you can’t just go to B&Q/Home Depot and buy.

There are several different components to the capture system. The camera array itself is made up of 17 Point Grey Grasshopper cameras that offer nine stops of dynamic range at ISO 600, capturing a 2048×2048 image from a 1” sensor using a global shutter set to 30fps. They are pre-focussed during the build of the camera and should be arranged in serial number order on the machined aluminium baseplate.

14 cameras face outwards, mounted with wide-angle C-mount Fujinon lenses. These are combined with two downward-facing and one upward-facing fisheyes for maximum coverage. Facebook suggest a 5′ minimum distance from your subject and recommend mounting the camera on a sturdy stand at around head height (between 4′10″ and 6′2″ from the ground).

All 17 cameras connect to an external breakout box via USB 3 and then into the computer via a PCIe x8 card – necessary to shift the 17Gbps of data that the array generates. Canon, it really might be time to update the C100 Mk II with higher bitrates. Just a thought.
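For the curious, that 17Gbps figure can be roughly sanity-checked from the camera specs quoted above. The sketch below is back-of-envelope arithmetic only: the 8 bits per pixel is my assumption (uncompressed raw sensor output), not something stated in the Facebook documentation, and the function name is made up for illustration.

```python
# Back-of-envelope check of the array's raw data rate.
# Camera count, resolution and frame rate come from the article;
# BITS_PER_PIXEL is an ASSUMPTION (8-bit uncompressed raw output).
CAMERAS = 17
WIDTH = 2048
HEIGHT = 2048
FPS = 30
BITS_PER_PIXEL = 8  # assumption, not from the Facebook spec


def raw_data_rate_gbps(cameras=CAMERAS, width=WIDTH, height=HEIGHT,
                       fps=FPS, bits_per_pixel=BITS_PER_PIXEL):
    """Return the combined uncompressed data rate in gigabits per second."""
    bits_per_second = cameras * width * height * fps * bits_per_pixel
    return bits_per_second / 1e9


if __name__ == "__main__":
    print(f"{raw_data_rate_gbps():.1f} Gbps")
```

Under that assumption the sum comes out at roughly 17Gbps, which lines up with the quoted figure. A higher bit depth would push the number up proportionally.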

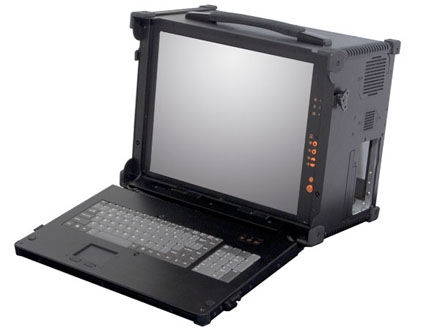

The data stream is routed into a dedicated lunchbox ‘camputer’ (see what they did there) based on the Apollo A1. It runs Linux and Facebook’s stitching software and although it’s not frighteningly exotic it does have eight CPU cores and 64GB of memory. There’s no dedicated graphics processor in the spec so the heavy lifting of the stitch is all being done by a mainstream high-end consumer Intel CPU.

The camputer doesn’t have a lot of onboard storage (a 128GB SSD) so footage is recorded to a dedicated 8TB RAID, which is enough space to capture an hour of material (it’s having to deal with feeds from 17 cameras). I can’t see what RAID mode is recommended, but assuming it’s a sensible RAID 5 for redundancy and speed that should result in around 6TB of usable space. So (maths!) you’re looking at around 100GB/min for storing the raw footage.
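To show the working behind that estimate, here is a minimal sketch of the arithmetic. It assumes RAID 5 across four disks (my guess at the layout that gets you from 8TB raw to 6TB usable, since the recommended RAID mode isn’t stated) and decimal units of 1TB = 1000GB. The function names are hypothetical.

```python
# Rough storage arithmetic for the capture RAID.
# The 8TB capacity and one-hour recording time are from the article;
# RAID 5 and the four-disk layout are ASSUMPTIONS.


def raid5_usable_tb(total_tb, disks):
    """RAID 5 gives up one disk's worth of capacity to parity."""
    if disks < 3:
        raise ValueError("RAID 5 needs at least three disks")
    return total_tb * (disks - 1) / disks


def gb_per_minute(usable_tb, minutes):
    """Average space consumed per minute if the array fills in `minutes`."""
    return usable_tb * 1000 / minutes  # decimal units: 1TB = 1000GB


if __name__ == "__main__":
    usable = raid5_usable_tb(total_tb=8, disks=4)
    print(f"Usable space: {usable:.0f}TB")
    print(f"Storage rate: {gb_per_minute(usable, minutes=60):.0f}GB/min")
```

A different disk count or RAID mode would change the usable figure, and with it the per-minute estimate.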

Back to the camputer, and the Facebook capture software has a web interface and offers a preview from up to four cameras so you can get your scene framed up. The instructional PDF suggests you might want to either hide the ugly boxes (computer, breakout box and RAID, do keep up) behind something in the scene, or place everything at the base of the camera and remove it in post. Oh, and you’ll also need mains power for everything.

Once you’ve captured the raw footage, another piece of software will ‘process’ your ‘dataset’ and produce a final spherical render – the default resolution is 6K but with 3K, 4K and 8K options as well. Somewhat ominously there don’t seem to be any guide render times. I suspect you might want to set up somewhere near a sofa (or a coffee machine) before pressing Go.

So it’s not quite run-and-gun ready yet but the hardware isn’t as exotic as I’d been expecting – in particular the computing hardware needed to control the cameras and render their output is already a couple of years old at this point, so hardly cutting edge. That said, rendering out the final image could, I suspect, happily take as many CPU cores as you can afford to throw at it.

Now, you could in theory build one of these in your shed, but unless you’ve got access to a CNC milling machine, a supply of aluminium, and around $30k it’s a bit unlikely. Still, the ingenuity of the internet knows no bounds, and I for one am looking forward to seeing if anyone actually manages to make use of the instructions to make their own Facebook Surround 360 camera. Let us know if that’s you!